Ground-View Event Camera-Based Velocity Estimation Enabled by Spiking Neural Networks for Ground Robots

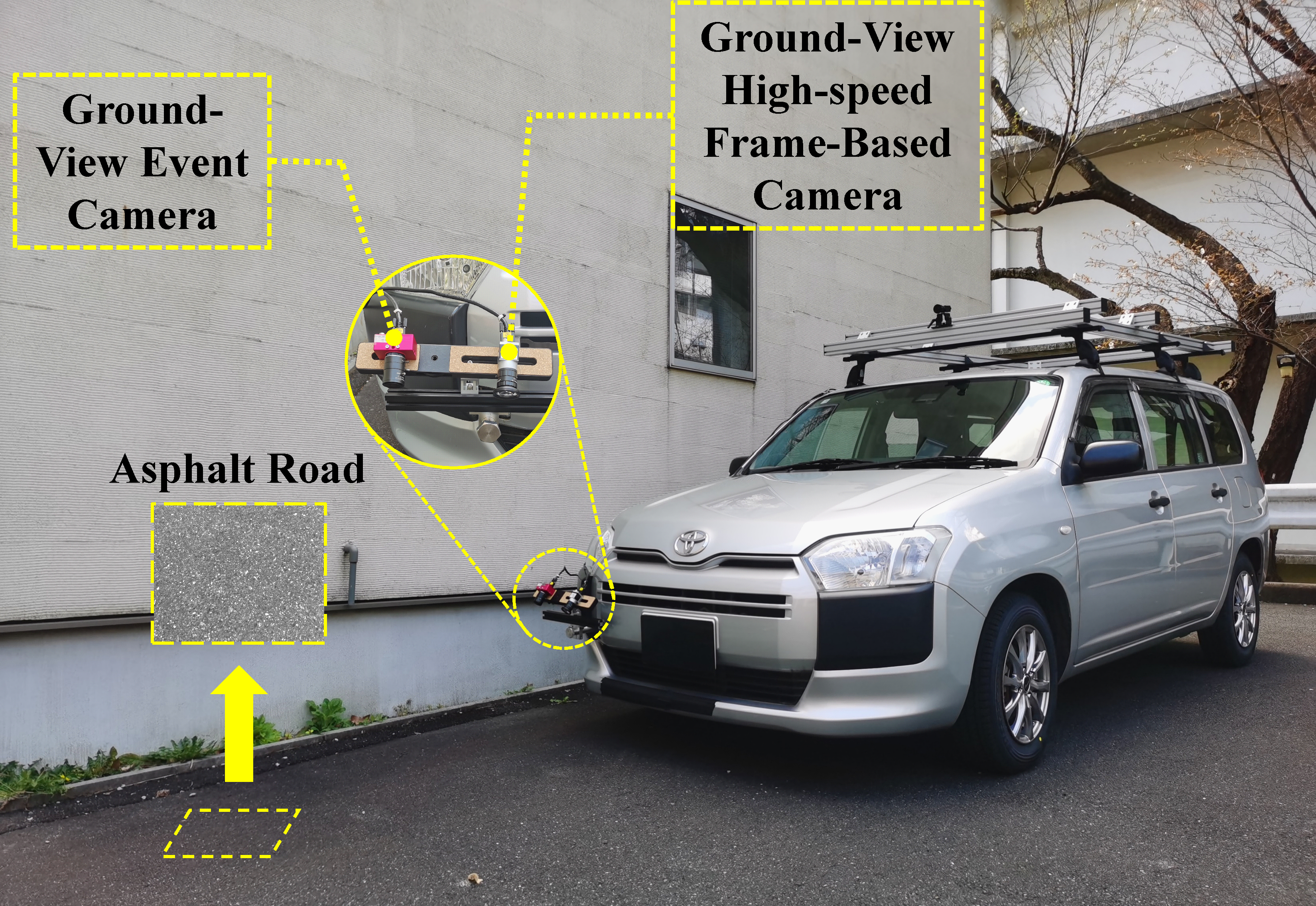

Ground-view event camera mounted on a real vehicle for outdoor velocity estimation.

Abstract

Accurate velocity estimation plays a key role in ensuring precise motion control and safe navigation of ground robots. Ground-view event cameras provide a reliable sensing modality for this task: they exploit the static and stable nature of the ground, together with the sensor's high temporal resolution, to extract motion cues robustly even under challenging conditions such as scene dynamics, low light, or high dynamic range.

This paper proposes a novel velocity estimation method for ground robots using a ground-view event camera, whose application in a ground-looking configuration has received limited attention. We leverage a spiking neural network (SNN) to process event-based visual information directly, exploiting its intrinsic temporal dynamics to infer motion from ground texture event streams and eliminating the need for long-term event accumulation. To address the challenge of costly hyperparameter tuning associated with the conventional loss function, we propose a novel geometric loss function that automatically balances translation and rotation by using the differences between event coordinates warped by the predicted and the ground-truth motion.

Experiments on both synthetic and real-world data demonstrate that the proposed method not only handles diverse ground textures and variations in the camera's pose relative to the ground, but also generalizes well to real-world scenarios even when the network is trained exclusively on synthetic data.

Method

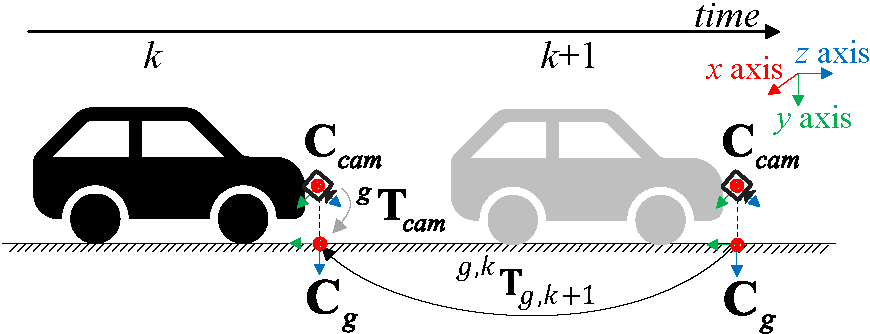

We mount an event camera in a ground-looking configuration on a mobile robot and directly predict its 3-DoF planar motion (xg, yg, φg) from short event streams. The geometry is illustrated below: Ccam is the event camera pose, Cg is its projection on the ground, gTcam is the extrinsic transform, and g,kTg,k+1 is the planar motion from time k to k+1 that we want to estimate.

Fig. 1 — Geometry of the problem. The vehicle icon is for illustration; the method applies to general ground robots.

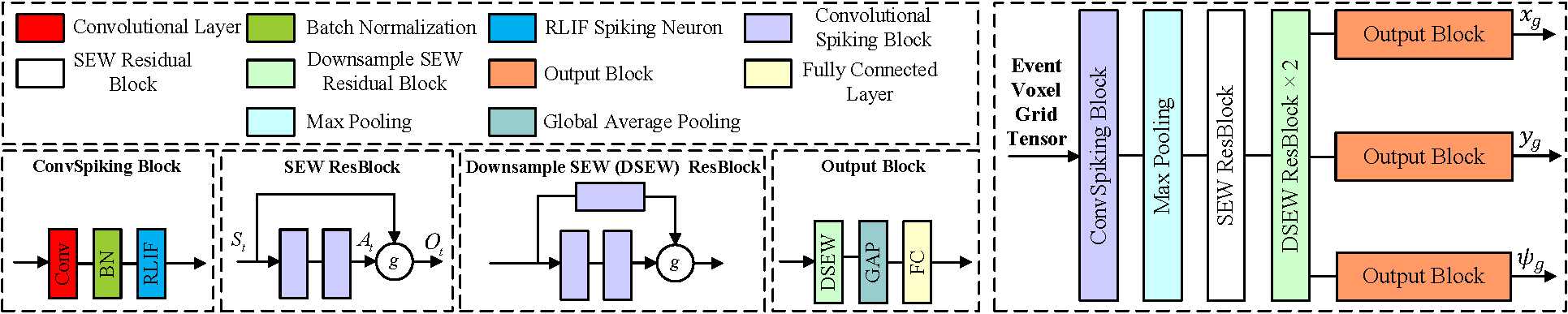

SNN architecture. Events within a fixed-length time window (we use 2 ms) are encoded into an event voxel grid and fed into a spiking network composed of a convolutional spiking block, one max-pooling layer, one SEW residual block, two downsample SEW (DSEW) residual blocks, and three output branches that regress xg, yg, φg respectively. The recurrence of output spikes and the membrane potential of LIF/RLIF neurons together carry past sequential information, so the network can predict motion without accumulating events over long periods.

Fig. 2 — SNN architecture: ConvSpiking + SEW ResBlock + DSEW ResBlock × 2 + three Output Blocks for xg, yg, φg.
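The input encoding and the neuron dynamics described above can be sketched in NumPy. This is a minimal illustration, not the paper's implementation: the number of temporal bins, the signed polarity convention, the hard reset, and the values of `tau`, `v_threshold`, and `v_reset` are all assumptions, and the function names `events_to_voxel_grid` and `lif_step` are hypothetical.

```python
import numpy as np

def events_to_voxel_grid(t, x, y, p, t0, window, num_bins, height, width):
    """Accumulate events from [t0, t0 + window) into a (num_bins, H, W) grid."""
    grid = np.zeros((num_bins, height, width), dtype=np.float32)
    keep = (t >= t0) & (t < t0 + window)
    t, x, y, p = t[keep], x[keep], y[keep], p[keep]
    # Map each timestamp to a temporal bin index in [0, num_bins - 1].
    b = np.minimum(((t - t0) / window * num_bins).astype(np.int64), num_bins - 1)
    # Assumed convention: +1 for ON events, -1 for OFF events.
    values = np.where(p > 0, 1.0, -1.0).astype(np.float32)
    np.add.at(grid, (b, y.astype(np.int64), x.astype(np.int64)), values)
    return grid

def lif_step(v, input_current, tau=2.0, v_threshold=1.0, v_reset=0.0):
    """One discrete LIF update: leak and integrate, fire, then hard reset."""
    v = v + (input_current - (v - v_reset)) / tau
    spikes = (v >= v_threshold).astype(np.float32)
    v = np.where(spikes > 0, v_reset, v)
    return spikes, v

# Four events; the last one falls outside the 2 ms window and is dropped.
t = np.array([0.0, 0.0005, 0.0015, 0.003])
x = np.array([1, 1, 2, 0])
y = np.array([0, 0, 1, 0])
p = np.array([1, 1, 0, 1])
grid = events_to_voxel_grid(t, x, y, p, t0=0.0, window=0.002,
                            num_bins=2, height=2, width=3)

# The membrane potential persists between calls, so a sub-threshold input
# in one step still influences whether the neuron fires in the next step.
v = np.zeros(1)
s1, v1 = lif_step(v, np.array([1.5]))
s2, v2 = lif_step(v1, np.array([1.5]))
```

In this sketch the first LIF step leaves the neuron below threshold and the second step makes it fire, which is the sense in which the membrane potential carries information across consecutive short windows.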

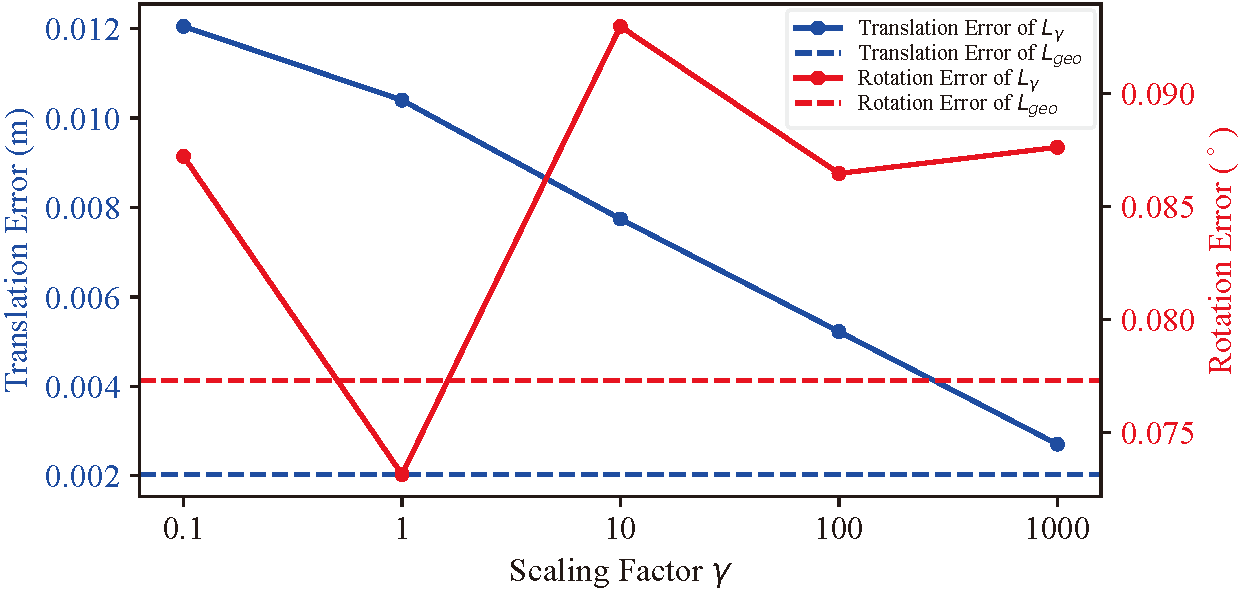

Geometric loss. A naive RMSE loss requires a hand-tuned scaling factor γ to balance translation and rotation, because their numerical scales differ by orders of magnitude. We instead select events within the time window, warp them with homography matrices computed from predicted motion and ground-truth motion respectively, and use the squared difference of their warped coordinates as the loss. Translation and rotation are jointly and automatically weighted by the geometry of the ground surface itself, removing the need for γ tuning.

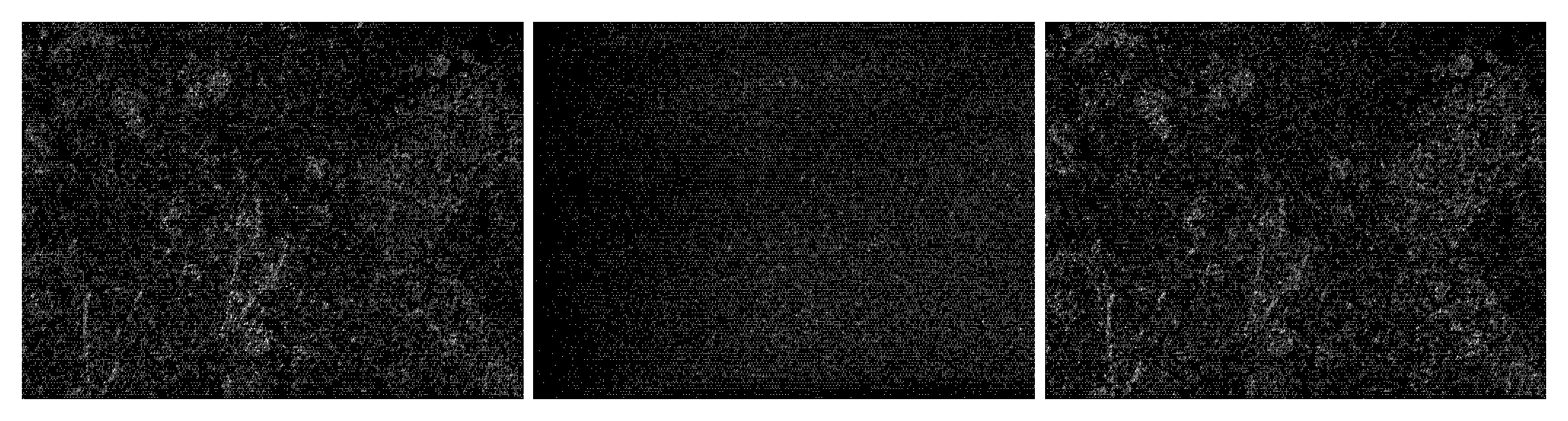

Fig. 3 — Warped event images. Left: ground-truth motion (sharp texture). Middle: untrained SNN (fails to warp). Right: SNN trained with the proposed Lgeo (recovers a sharp ground image).
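The geometric loss described above can be sketched in NumPy. This is a simplified illustration under assumptions that are not taken from the paper: the camera looks straight down from a known height with pinhole intrinsics `K`, its axes are aligned with the ground frame, and every event is warped by the full window motion (no per-timestamp interpolation). The helper names `planar_motion`, `ground_homography`, `warp`, and `geometric_loss` are hypothetical.

```python
import numpy as np

def planar_motion(x, y, phi):
    """3-DoF planar motion as a homogeneous 3x3 transform on the ground plane."""
    c, s = np.cos(phi), np.sin(phi)
    return np.array([[c, -s, x], [s, c, y], [0.0, 0.0, 1.0]])

def ground_homography(motion, K, height):
    """Image-space homography induced by a planar motion (nadir camera assumed)."""
    # M maps homogeneous ground coordinates (X, Y, 1) to homogeneous pixels.
    M = K @ np.diag([1.0 / height, 1.0 / height, 1.0])
    return M @ planar_motion(*motion) @ np.linalg.inv(M)

def warp(H, pixels):
    """Apply a homography to an (N, 2) array of pixel coordinates."""
    homog = np.hstack([pixels, np.ones((len(pixels), 1))])
    warped = homog @ H.T
    return warped[:, :2] / warped[:, 2:3]

def geometric_loss(pred, gt, pixels, K, height):
    """Mean squared distance between events warped by predicted and true motion."""
    diff = (warp(ground_homography(pred, K, height), pixels)
            - warp(ground_homography(gt, K, height), pixels))
    return np.mean(np.sum(diff ** 2, axis=1))

K = np.array([[100.0, 0.0, 160.0], [0.0, 100.0, 120.0], [0.0, 0.0, 1.0]])
events_xy = np.array([[10.0, 20.0], [200.0, 150.0]])

loss_same = geometric_loss((0.01, 0.0, 0.02), (0.01, 0.0, 0.02), events_xy, K, 1.0)
loss_trans = geometric_loss((0.01, 0.0, 0.0), (0.0, 0.0, 0.0), events_xy, K, 1.0)
loss_rot = geometric_loss((0.0, 0.0, 0.01), (0.0, 0.0, 0.0), events_xy, K, 1.0)
```

In this setup a 1 cm translation error at 1 m height shifts every event by one pixel, while a rotation error displaces each event in proportion to its distance from the rotation centre. Both errors end up in the same pixel-space residual, so no separate weighting factor is needed.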

Why γ tuning is not enough. The figure below sweeps the scaling factor γ in the weighted RMSE loss Lγ: translation error decreases monotonically as γ grows, while rotation error is minimized near γ = 1. There is no single γ that minimizes both. The proposed Lgeo (dashed horizontal lines) achieves the lowest translation error and a rotation error close to the minimum — without any tuning.

Fig. 4 — Translation and rotation errors versus scaling factor γ; dashed lines show Lgeo (no scaling factor).
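For contrast, a weighted RMSE loss of the kind swept in this comparison can be sketched as follows. The exact form of Lγ is not given here, so this is only an assumed form: γ is placed on the translation term, which is consistent with the reported trend that translation error falls as γ grows. The function name `weighted_rmse_loss` is hypothetical.

```python
import numpy as np

def weighted_rmse_loss(pred, gt, gamma):
    """Assumed form: sqrt(mean(gamma * (dx^2 + dy^2) + dphi^2))."""
    err = np.asarray(pred, dtype=float) - np.asarray(gt, dtype=float)
    trans_sq = err[:, 0] ** 2 + err[:, 1] ** 2  # squared translation error
    rot_sq = err[:, 2] ** 2                     # squared rotation error
    return np.sqrt(np.mean(gamma * trans_sq + rot_sq))

pred = [[0.3, 0.4, 0.1]]
gt = [[0.0, 0.0, 0.0]]
loss_g1 = weighted_rmse_loss(pred, gt, gamma=1.0)
loss_g4 = weighted_rmse_loss(pred, gt, gamma=4.0)
```

Whatever the exact form, the relative weight of the two terms depends on a value of γ that has to be chosen by hand, which is the tuning burden that Lgeo avoids.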

Results

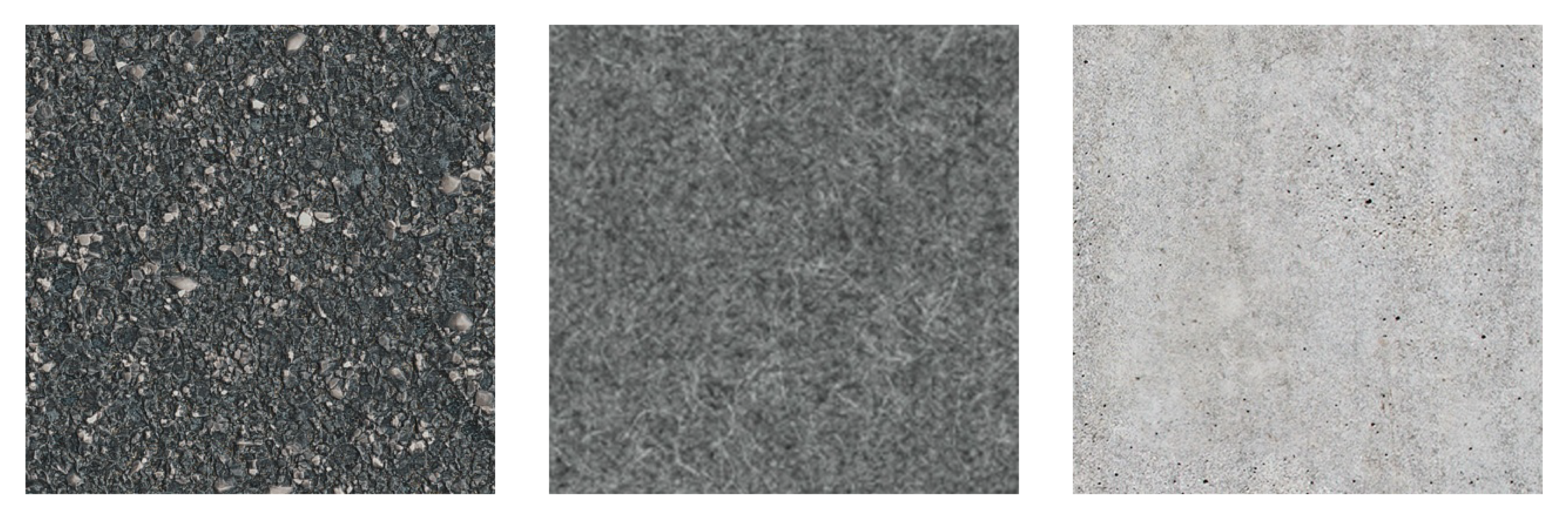

We trained the SNN only on synthetic data generated with the ESIM event simulator on asphalt textures, then evaluated on (i) synthetic data covering three ground textures (asphalt / carpet / concrete), (ii) a real-world indoor handcart on carpet, and (iii) a real vehicle outdoors on asphalt.

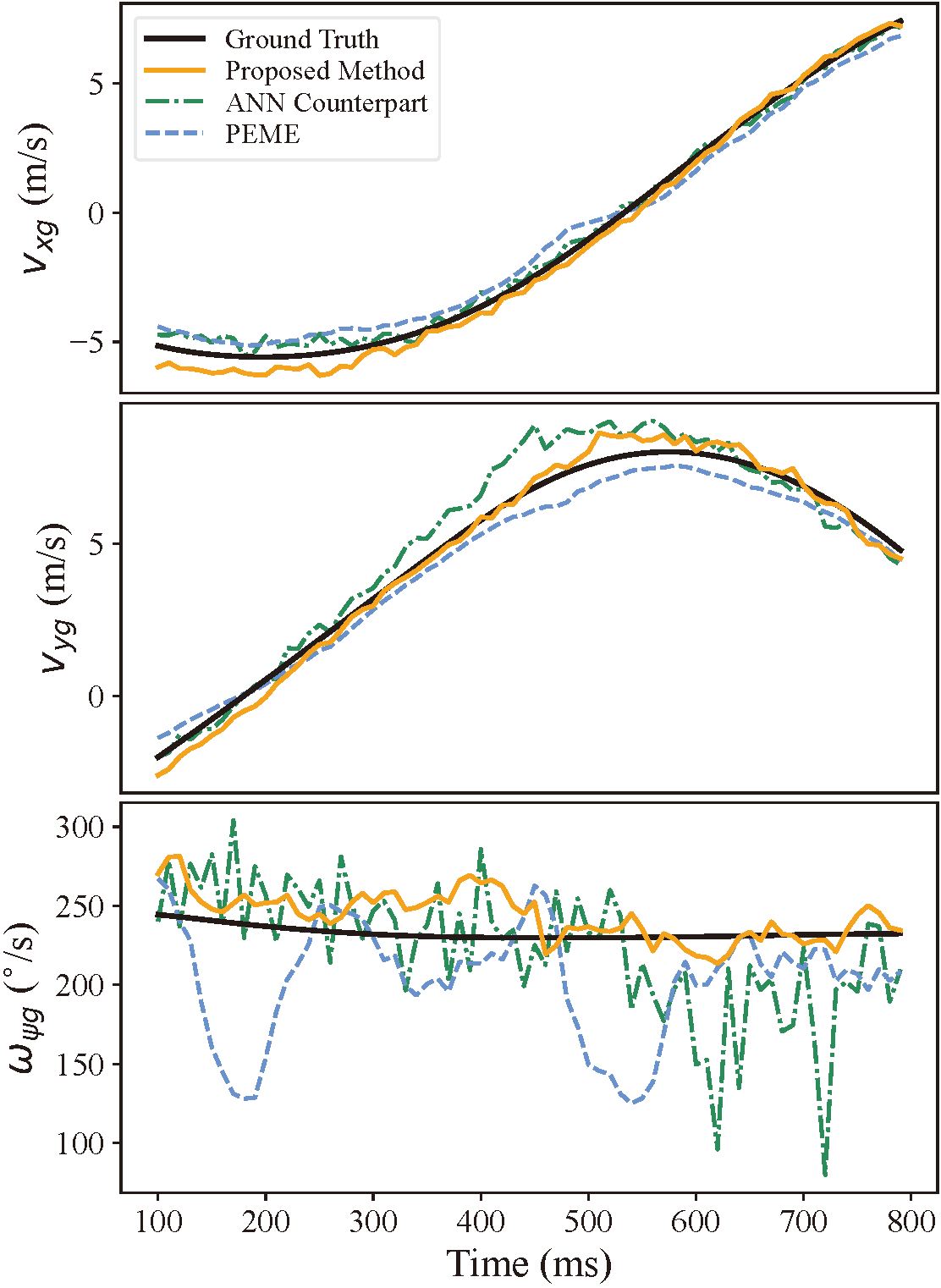

Synthetic test set. The table below reports RMSEs for linear velocities vxg, vyg [m/s] and angular velocity ωφg [°/s], compared against the PEME baseline and an ANN counterpart trained on the same data with the same overall architecture.

| Method | Component | Asphalt | Carpet | Concrete |
|---|---|---|---|---|
| Proposed | vxg (m/s) | 0.3998 | 0.3697 | 0.4488 |
| | vyg (m/s) | 0.5358 | 0.5902 | 0.6522 |
| | ωφg (°/s) | 25.17 | 30.81 | 29.76 |
| ANN Counterpart | vxg (m/s) | 0.6339 | 0.5206 | 0.3830 |
| | vyg (m/s) | 0.6068 | 0.7196 | 0.3124 |
| | ωφg (°/s) | 43.50 | 44.48 | 36.85 |
| PEME [28] | vxg (m/s) | 0.5548 | 0.7256 | 0.7163 |
| | vyg (m/s) | 0.5251 | 0.7685 | 0.6945 |
| | ωφg (°/s) | 31.38 | 41.26 | 36.54 |

Table 1 (excerpt) — RMSEs on synthetic data over three ground textures. Bold = lowest in column.

Across all three textures the proposed method keeps linear velocity RMSE below 0.66 m/s and angular velocity RMSE below 31°/s, despite being trained only on asphalt. Note that PEME's results are computed with the ground-truth camera-to-ground pose, whereas the proposed method predicts velocity with absolute scale directly.

Fig. 5 — Three different ground surface textures in the test set: (a) asphalt, (b) carpet, (c) concrete.

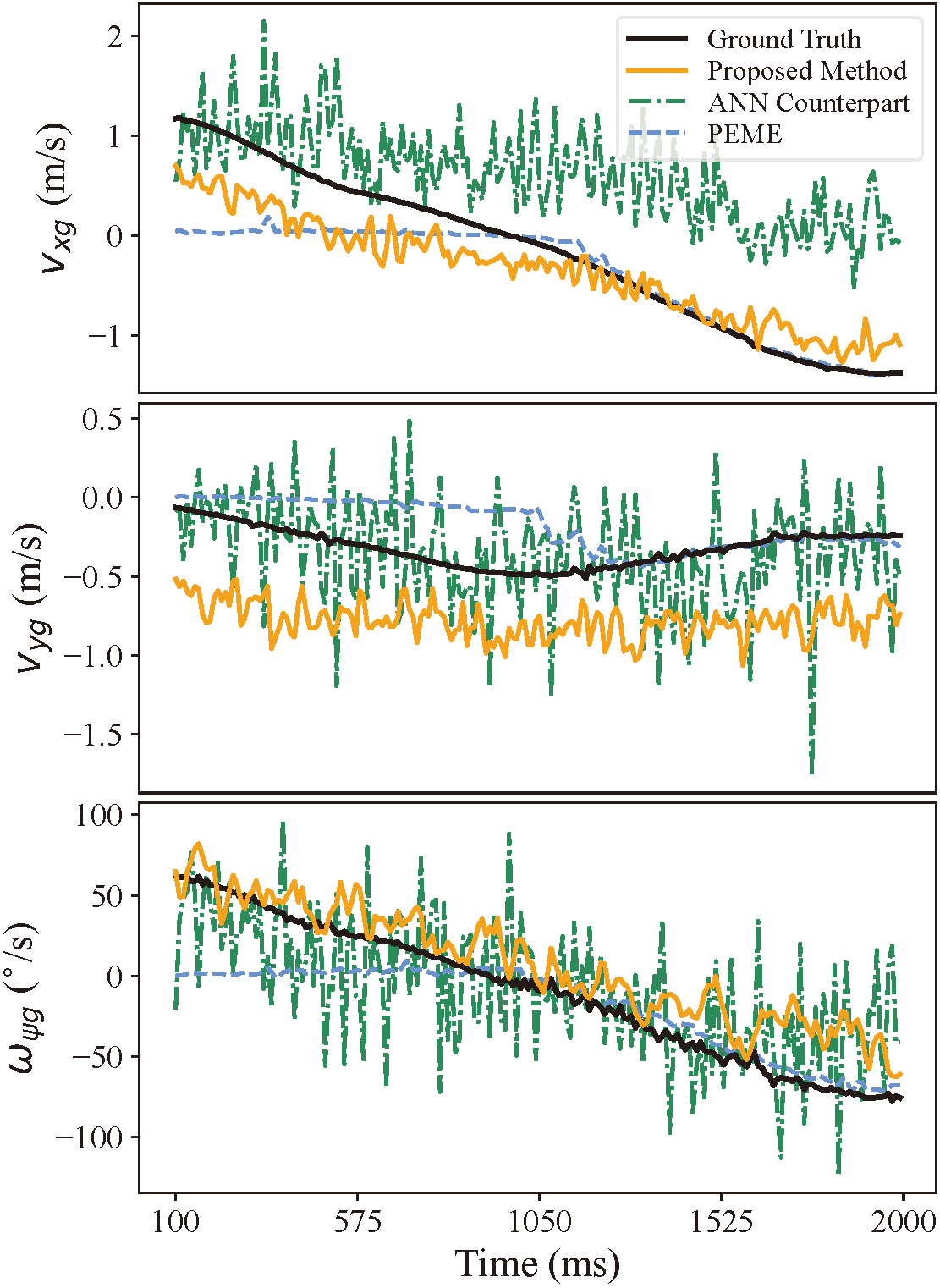

Fig. 6 — Estimated vxg, vyg, ωφg on a synthetic sequence. The proposed method tracks the ground truth more tightly than PEME and the ANN counterpart, especially for angular velocity.

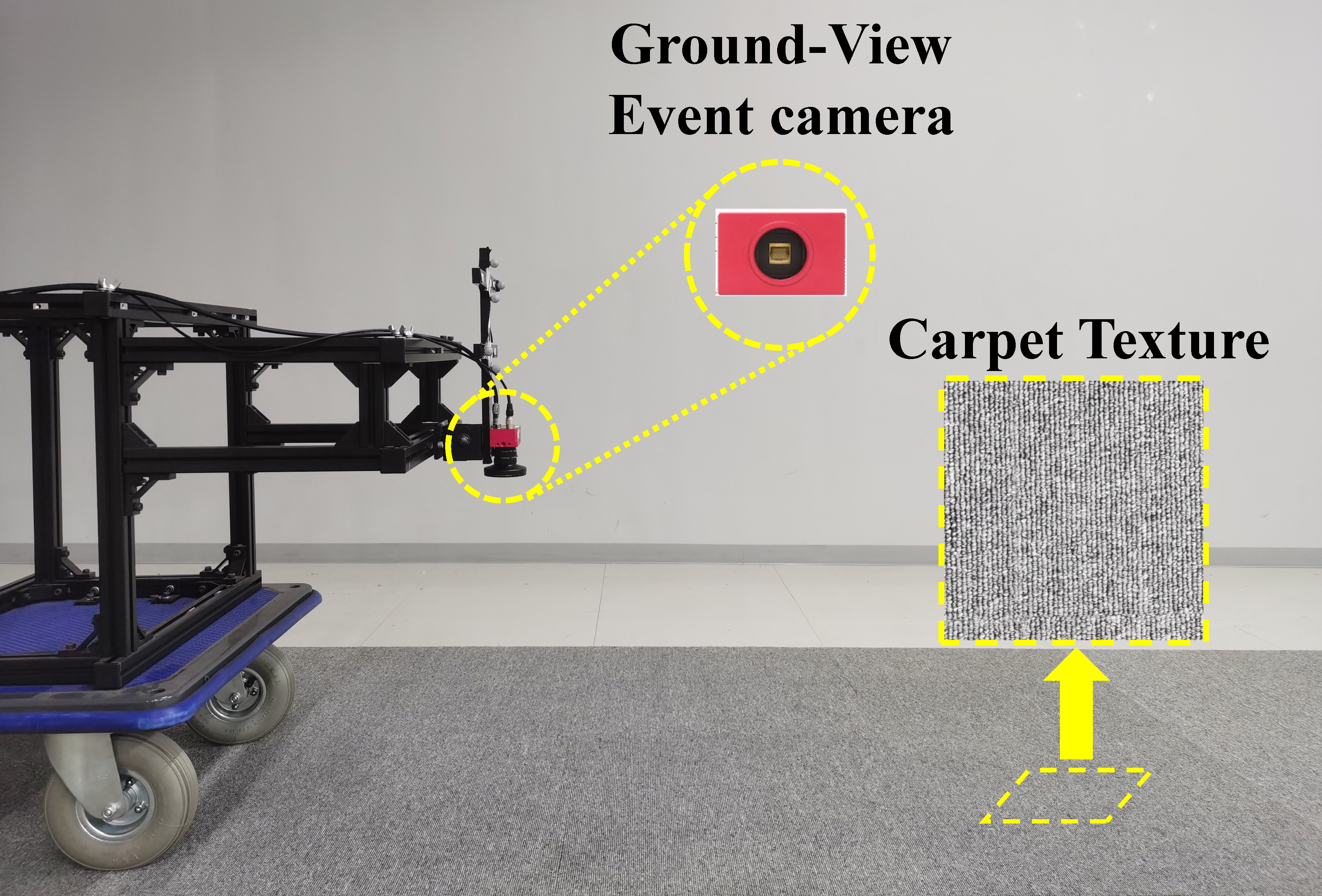

Real-world indoor: handcart on carpet. We pushed a handcart with a DAVIS346 event camera along random trajectories on carpet, capturing 108 sequences with OptiTrack ground truth. The network was not fine-tuned — it still operates on weights trained on synthetic asphalt only.

Fig. 7 — Indoor data collection setup: handcart with a ground-view event camera over carpet.

Fig. 8 — Indoor real-world results. The proposed method achieves linear-velocity RMSE below 0.35 m/s and angular-velocity RMSE below 18.5°/s, despite training only on synthetic asphalt.

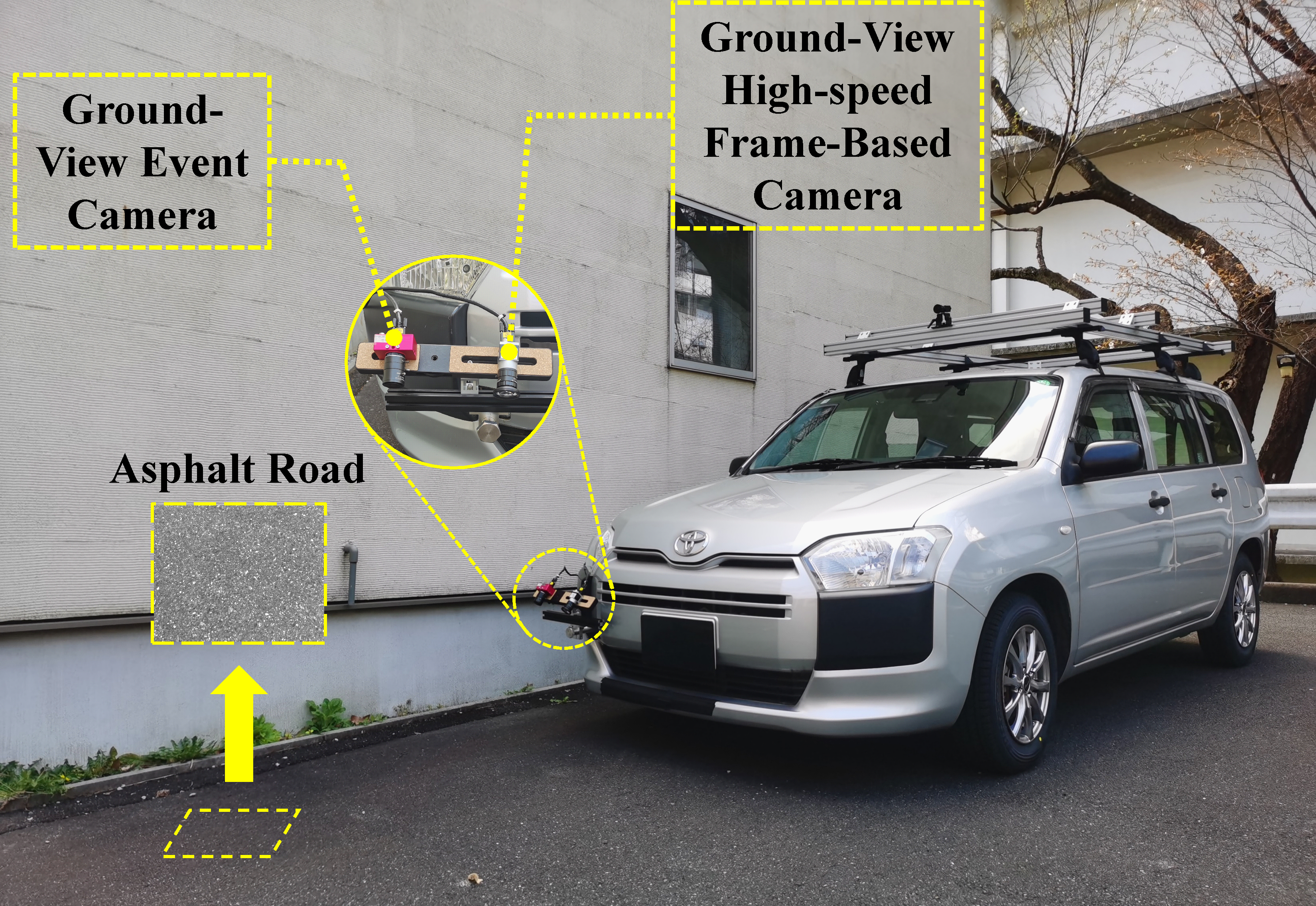

Real-world outdoor: vehicle on asphalt. We mounted the event camera together with a 500 Hz frame-based reference camera on a real vehicle and drove on an asphalt road. Both cameras face the ground; the frame-based stream provides reference motion via high-speed vision odometry (HSV-odometry).

Fig. 9 — Real-vehicle outdoor setup: ground-view event camera + ground-view high-speed frame-based reference camera.

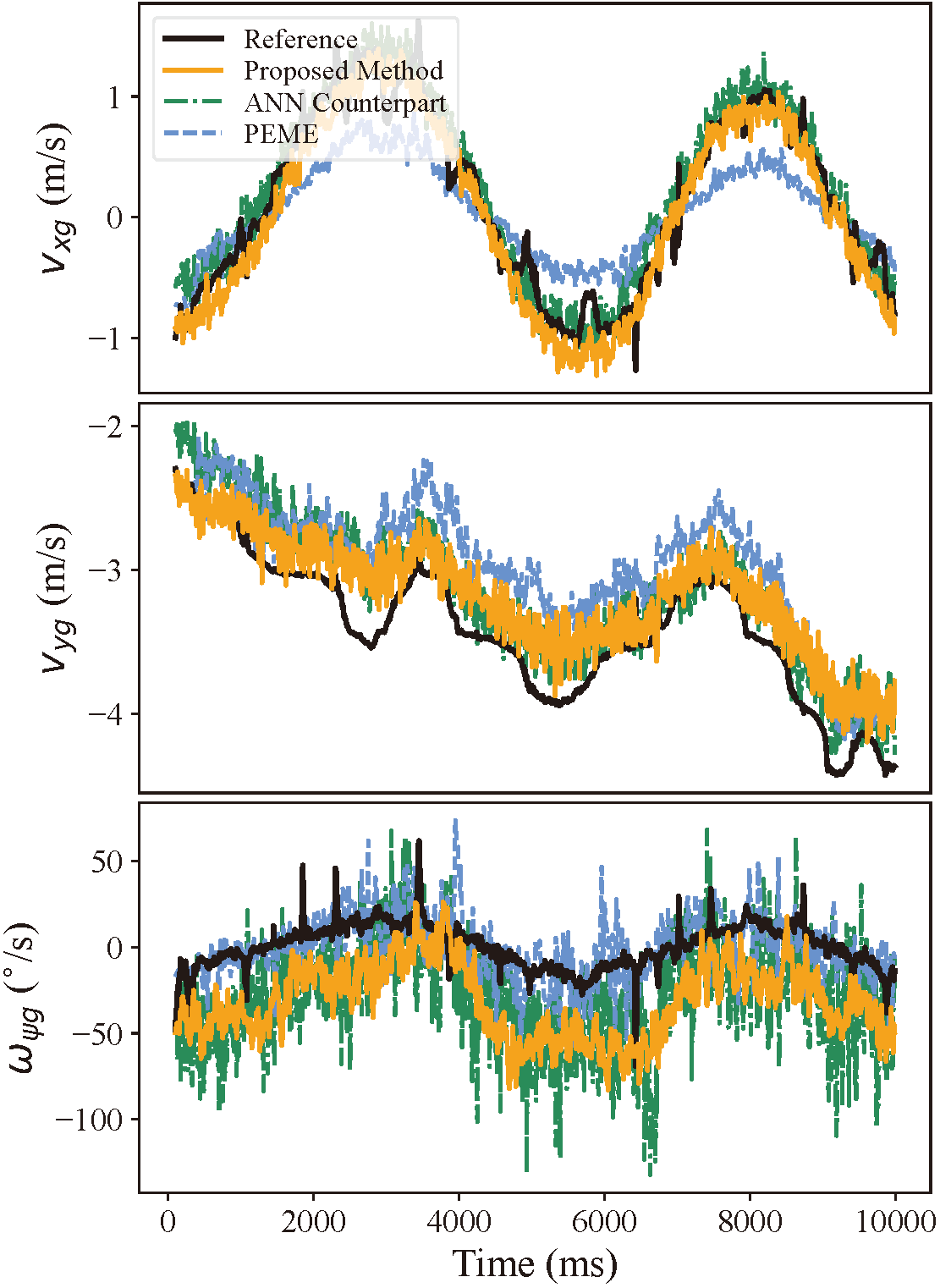

Fig. 10 — Outdoor real-vehicle results. The proposed SNN matches the reference velocity closely; PEME degrades noticeably on vxg.

Robustness to camera-to-ground pose error. A key advantage of our method is that, unlike PEME, it does not require the camera-to-ground pose at inference time. To test this, we injected an error of 5° in pitch/roll and 0.05 m in height into the camera-to-ground pose. The proposed method's RMSEs are unchanged because it never uses that pose, while PEME's vyg RMSE roughly doubles (0.39 → 0.77 m/s).

| Method | Component | Without pose error | With pose error |
|---|---|---|---|
| Proposed | vxg (m/s) | 0.2077 | 0.2077 |
| | vyg (m/s) | 0.4535 | 0.4535 |
| | ωφg (°/s) | 44.71 | 44.71 |
| PEME [28] | vxg (m/s) | 0.2930 | 0.3205 |
| | vyg (m/s) | 0.3935 | 0.7739 |
| | ωφg (°/s) | 13.83 | 15.79 |

Table 3 — Outdoor real-vehicle RMSEs with and without injected camera-to-ground pose error.

Energy efficiency. Following standard 45 nm CMOS energy estimates (EMAC = 5.1 × EAC), a synaptic-operation analysis indicates that the proposed SNN is 8.18× more energy-efficient than its ANN counterpart with the same overall architecture — while delivering equal or better accuracy on most evaluated components.
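The accounting behind such a comparison can be sketched as below. This is a simplified model, not the paper's analysis: it assumes every ANN connection costs one multiply-accumulate (MAC), every SNN synaptic operation costs one accumulate (AC), and energy is measured in units of one AC, using the ratio EMAC = 5.1 × EAC quoted above. The function names and the operation counts in the example are hypothetical.

```python
def synaptic_ops(connections, firing_rate, timesteps):
    """Assumed SNN operation count: one AC per connection per emitted spike."""
    return connections * firing_rate * timesteps

def energy_ratio(ann_macs, snn_synaptic_ops, e_mac_over_e_ac=5.1):
    """ANN energy divided by SNN energy, in units where one AC costs 1."""
    ann_energy = ann_macs * e_mac_over_e_ac
    snn_energy = snn_synaptic_ops * 1.0
    return ann_energy / snn_energy

# Made-up counts: same connectivity, 25% average firing rate, 2 timesteps.
ops = synaptic_ops(connections=1_000_000, firing_rate=0.25, timesteps=2)
ratio = energy_ratio(ann_macs=1_000_000, snn_synaptic_ops=ops)
```

Under this model the advantage of the SNN comes from two factors: accumulates are cheaper than multiply-accumulates, and sparse firing reduces the number of operations actually performed.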

Citation

@article{su2026groundview,
  author  = {Su, Junzhe and Hirano, Masahiro and Yamakawa, Yuji},
  title   = {Ground-View Event Camera-Based Velocity Estimation Enabled by Spiking Neural Networks for Ground Robots},
  journal = {IEEE Access},
  volume  = {14},
  pages   = {64935--64948},
  year    = {2026},
  doi     = {10.1109/ACCESS.2026.3686315}
}