Detection-and-Tracking-Oriented Roadside Camera Placement via Observation-Augmented Bayesian Optimization

Abstract

This paper proposes a roadside camera placement method that directly maximizes detection and tracking performance via Bayesian optimization. Conventional geometric approaches model the sensing space explicitly and optimize sensor layouts to maximize spatial coverage; however, they typically assume homogeneous sensors and environments, and therefore cannot reflect the performance of the actual perception algorithms to be deployed, nor site-specific factors that affect sensing (e.g., low-angle sunlight). To address this limitation, we design an evaluation metric for a roadside camera system that is computed in simulation using a practical object detection and tracking pipeline, and we directly maximize the metric via Bayesian optimization. Furthermore, because simulation dominates the computational cost of Bayesian optimization, we place more cameras than the intended number in each simulation run so that multiple observations can be evaluated per run, thereby significantly reducing the overall optimization time. Experiments demonstrate that our method derives a camera placement that outperforms the best placement designed by multiple human participants, while achieving more than a 4× speedup over optimization without observation augmentation.

Method

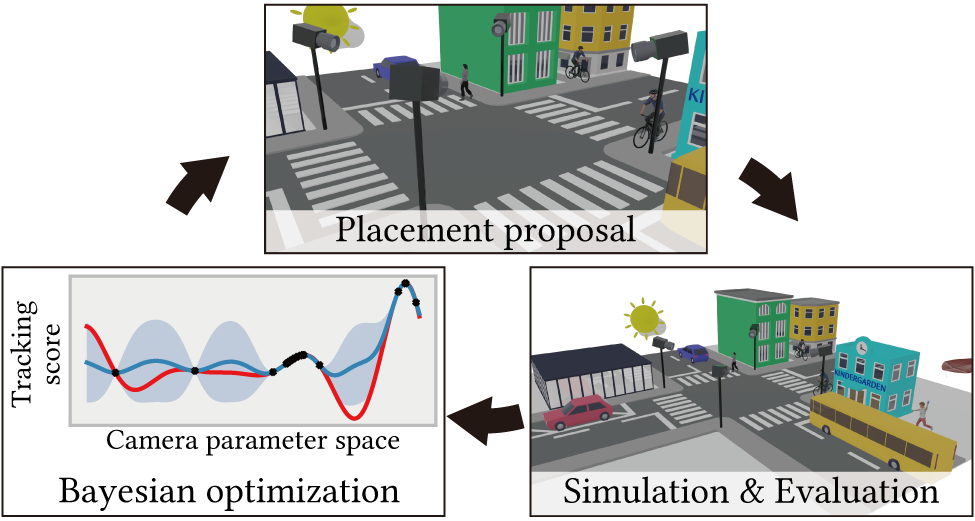

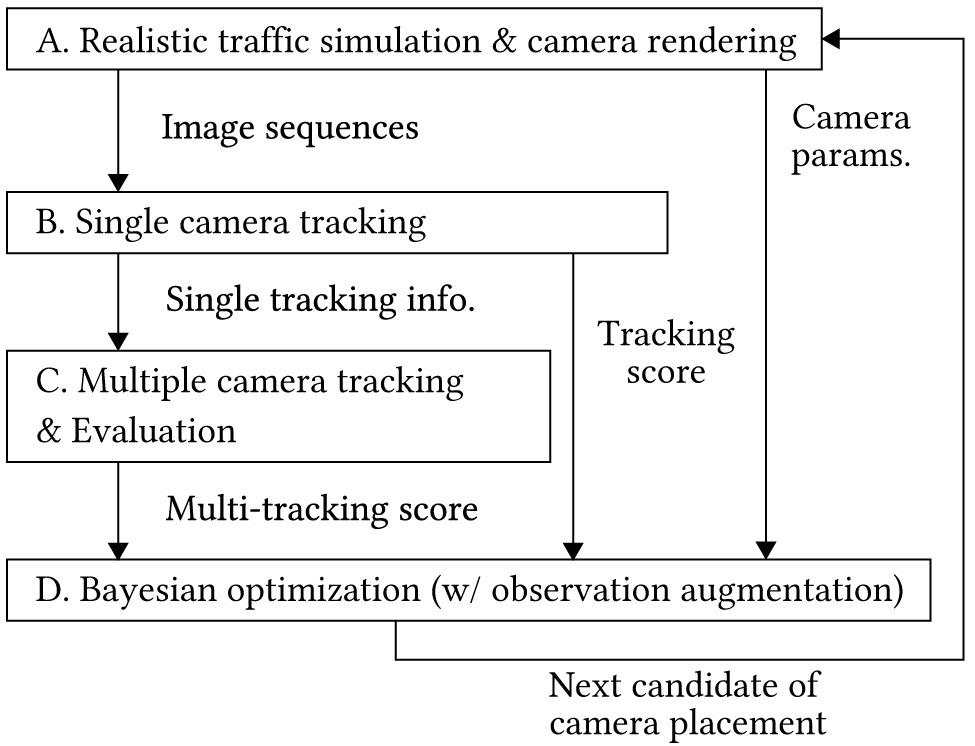

We formulate roadside camera placement as a black-box optimization problem and solve it via Bayesian optimization. Given a 3D digital twin reproducing the target environment, the framework (A) places candidate cameras and runs a traffic simulation, (B) performs object detection and tracking on each camera stream, (C) integrates per-camera tracklets into a multi-camera tracking score, and (D) updates a Gaussian process surrogate to propose the next placement. Iterating this loop yields placements with progressively higher tracking performance.
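The loop described above can be sketched in a few lines. The following is a minimal, standard-library-only illustration, not the authors' implementation: `toy_score` is a hypothetical stand-in for steps (A) to (C), the placement is reduced to a 2D point in the unit square, and the squared-exponential kernel, the upper-confidence-bound acquisition rule, and all hyperparameters are assumptions chosen for brevity.

```python
import math
import random

def rbf(a, b, length=0.3):
    """Squared-exponential kernel between two parameter vectors (assumed kernel)."""
    d2 = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-0.5 * d2 / length ** 2)

def invert(A):
    """Invert a small square matrix by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [1.0 if i == j else 0.0 for j in range(n)] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        piv = M[c][c]
        M[c] = [v / piv for v in M[c]]
        for r in range(n):
            if r != c and M[r][c] != 0.0:
                f = M[r][c]
                M[r] = [a - f * b for a, b in zip(M[r], M[c])]
    return [row[n:] for row in M]

def gp_posterior(X, K_inv, alpha, x_new):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    k_star = [rbf(a, x_new) for a in X]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    w = [sum(r * k for r, k in zip(row, k_star)) for row in K_inv]
    var = max(1.0 - sum(k * wi for k, wi in zip(k_star, w)), 0.0)
    return mean, math.sqrt(var)

def toy_score(x):
    """Hypothetical stand-in for simulation + tracking + scoring; returns a value in (0, 1]."""
    return math.exp(-8.0 * ((x[0] - 0.7) ** 2 + (x[1] - 0.3) ** 2))

def bayes_opt(objective, dim=2, n_init=5, n_iter=15, n_cand=200,
              beta=2.0, noise=1e-3, seed=0):
    rng = random.Random(seed)
    X = [[rng.random() for _ in range(dim)] for _ in range(n_init)]
    y = [objective(x) for x in X]
    for _ in range(n_iter):
        # Step (D): refit the surrogate on all observations gathered so far.
        K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
             for i, a in enumerate(X)]
        K_inv = invert(K)
        alpha = [sum(r * v for r, v in zip(row, y)) for row in K_inv]
        # Propose the next placement: best random candidate under UCB (assumed rule).
        cands = [[rng.random() for _ in range(dim)] for _ in range(n_cand)]
        def ucb(c):
            mean, std = gp_posterior(X, K_inv, alpha, c)
            return mean + beta * std
        x_next = max(cands, key=ucb)
        # Steps (A)-(C): evaluate the proposed placement.
        X.append(x_next)
        y.append(objective(x_next))
    best = max(range(len(y)), key=lambda i: y[i])
    return X[best], y[best]

best_x, best_y = bayes_opt(toy_score)
print("best placement:", best_x, "score:", best_y)
```

In a real setting the objective call is the expensive part, which is why the number of simulation runs, rather than the surrogate update, determines the total optimization time.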

Tracking-score-based objective. Unlike conventional coverage-based formulations, we directly evaluate detection and tracking performance using Multi-Camera Multi-Object Tracking (MCMOT). For each frame, a vehicle inside the target region is regarded as successfully tracked if it is tracked by at least one camera. The multi-tracking score is defined as the ratio of the number of successfully tracked frames to the total dwell time of vehicles in the target region, measured in frames and summed over vehicles.
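As a concrete reading of this definition, the sketch below computes the score from per-frame records. The data layout is an assumption made for illustration: `in_region` holds one `(vehicle_id, frame)` pair for every frame a vehicle spends in the target region, and `tracked_by_camera` maps each (hypothetical) camera name to the pairs that camera tracked.

```python
def multi_tracking_score(in_region, tracked_by_camera):
    """Fraction of in-region vehicle-frames tracked by at least one camera.

    in_region: set of (vehicle_id, frame) pairs inside the target region.
    tracked_by_camera: dict mapping camera_id to the set of
        (vehicle_id, frame) pairs that camera tracked.
    """
    if not in_region:
        return 0.0
    # A vehicle-frame counts as tracked if any camera covers it.
    covered = set().union(*tracked_by_camera.values())
    return len(in_region & covered) / len(in_region)

# Toy data: v1 dwells for 3 frames, v2 for 2 frames (5 vehicle-frames in total).
in_region = {("v1", 0), ("v1", 1), ("v1", 2), ("v2", 1), ("v2", 2)}
tracked = {
    "cam_a": {("v1", 0), ("v1", 1)},
    "cam_b": {("v1", 1), ("v2", 2), ("v3", 5)},  # ("v3", 5) is outside the region
}
score = multi_tracking_score(in_region, tracked)
print(score)  # 3 of 5 vehicle-frames are covered
```

Overlapping detections, such as `("v1", 1)` seen by both cameras, count only once, and detections outside the target region do not contribute.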

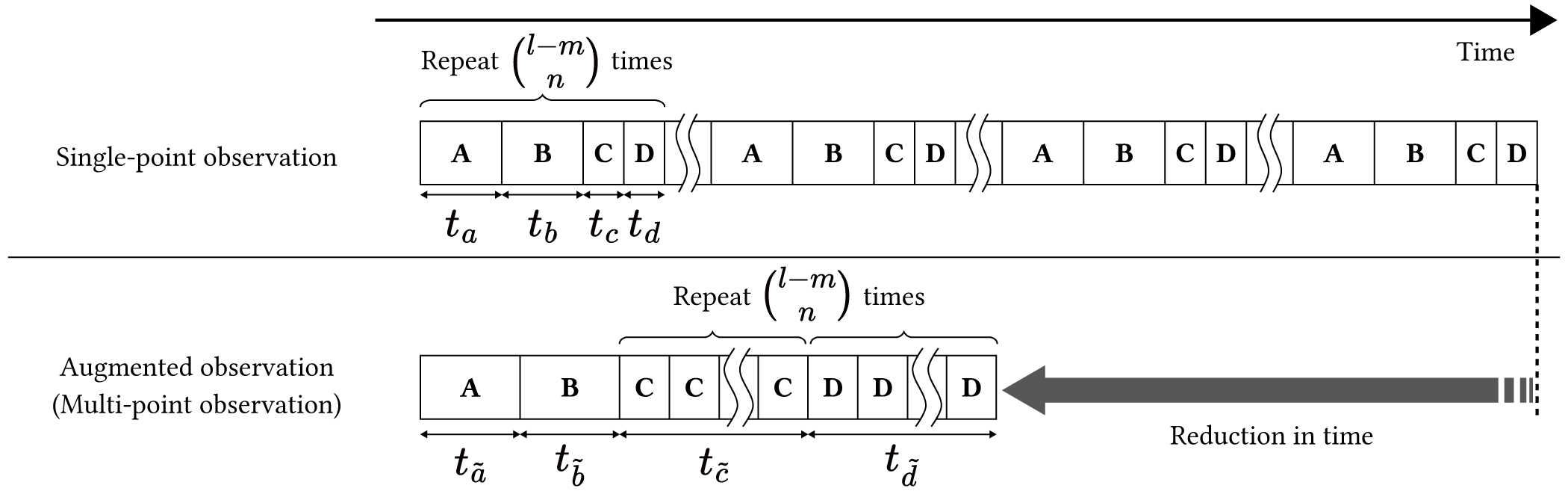

Observation Augmentation. In the Bayesian optimization loop, the simulation and per-camera tracking dominate the overall computational cost. To mitigate this bottleneck, we place more cameras than the planned number in a single simulation run and evaluate the multi-tracking score for combinatorially many camera subsets. This produces multiple observations per simulation run, drastically reducing the time required to gather a given number of observations.
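The idea can be illustrated with `itertools.combinations`. This is a sketch under stated assumptions, not the authors' code: it assumes the evaluated subsets are exactly those of the planned size n, reuses the per-frame data layout of `(vehicle_id, frame)` pairs as a hypothetical representation, and uses made-up camera names and tracking results.

```python
from itertools import combinations
from math import comb

def subset_score(in_region, tracked_by_camera, subset):
    """Multi-tracking score using only the cameras in `subset`."""
    covered = set().union(*(tracked_by_camera[c] for c in subset))
    return len(in_region & covered) / len(in_region)

def augmented_observations(in_region, tracked_by_camera, n):
    """One simulation run with m placed cameras yields C(m, n) observations."""
    cams = sorted(tracked_by_camera)
    return {s: subset_score(in_region, tracked_by_camera, s)
            for s in combinations(cams, n)}

# Toy run: one vehicle dwelling for 10 frames, m = 4 cameras placed, n = 2 planned.
in_region = {("v1", f) for f in range(10)}
tracked = {
    "cam0": {("v1", f) for f in range(0, 4)},   # frames 0-3
    "cam1": {("v1", f) for f in range(2, 6)},   # frames 2-5
    "cam2": {("v1", f) for f in range(5, 9)},   # frames 5-8
    "cam3": {("v1", 9)},                        # frame 9
}
obs = augmented_observations(in_region, tracked, n=2)
for subset, score in sorted(obs.items()):
    print(subset, score)
print(len(obs) == comb(4, 2))  # 6 observations from a single run
```

Each subset score reuses the tracking output already computed for the run, so the extra observations cost only set operations. As a hypothetical example of the scaling, placing six cameras when four are planned would give `comb(6, 4) == 15` observations per run.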

Results

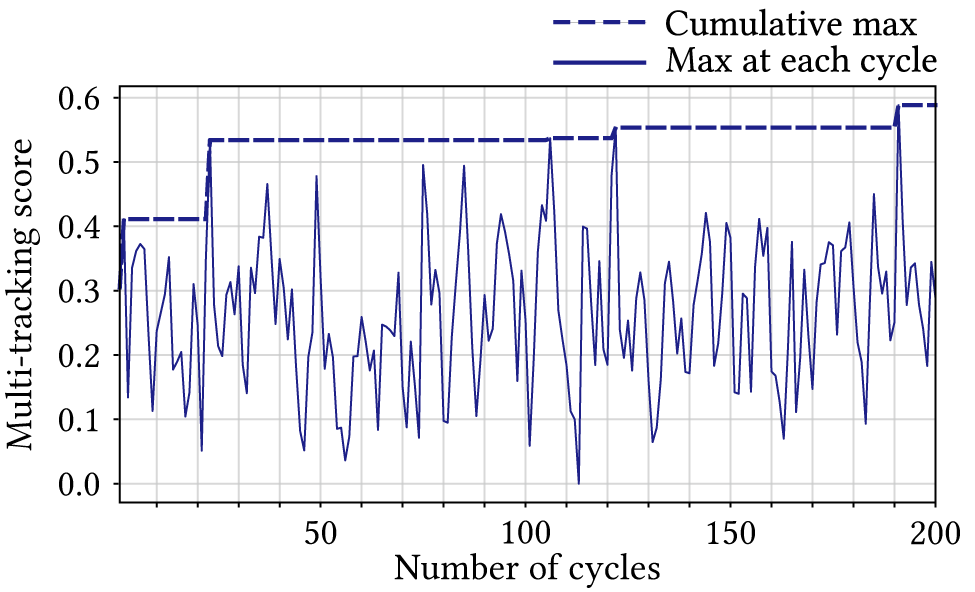

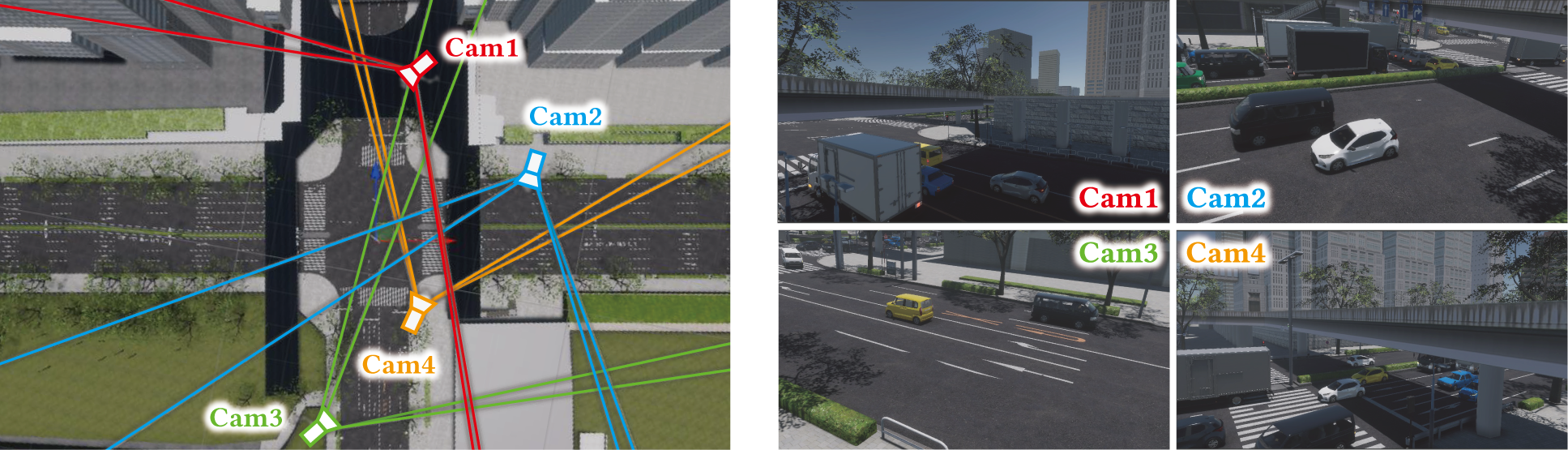

We evaluated our method on the AWSIM driving simulator with a digital twin of Nishi-Shinjuku, optimizing the placement of n = 4 cameras around an intersection over 200 cycles. The maximum multi-tracking score steadily improved as the optimization progressed, reaching 0.588 at cycle 192. For reference, even with extensive trial and error, human-designed placements only marginally exceed 0.5 at best, which highlights the strength of the score obtained automatically by our method.

The resulting placement covers the intersection from complementary viewpoints with sufficient overlap to support robust cross-camera tracking.

Comparison with human experts. We compared the proposed method against placements designed by six participants specializing in computer vision. The proposed method outperforms the best human placement by approximately 15%, and the average human score by approximately 32%.

| A | B | C | D | E | F | Proposed (ours) |
|---|---|---|---|---|---|---|
| 0.484 | **0.510** | 0.388 | 0.497 | 0.361 | 0.422 | 0.588 |

Multi-tracking scores. A–F are the human experts' placements; bold among A–F indicates the best human placement.
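The relative improvements quoted in the comparison can be reproduced from the tabulated scores. The short check below uses only the numbers in the table; the variable names are illustrative.

```python
# Multi-tracking scores from the table above.
human = {"A": 0.484, "B": 0.510, "C": 0.388, "D": 0.497, "E": 0.361, "F": 0.422}
proposed = 0.588

best_human = max(human.values())
mean_human = sum(human.values()) / len(human)

# Relative improvement of the proposed placement, as a fraction.
gain_over_best = proposed / best_human - 1.0
gain_over_mean = proposed / mean_human - 1.0

print(f"best human: {best_human:.3f}, mean human: {mean_human:.3f}")
print(f"gain over best: {gain_over_best:.1%}, gain over mean: {gain_over_mean:.1%}")
```

The gain over the best human placement comes out at roughly 15%, and the gain over the human average at between 32% and 33%, consistent with the figures stated in the text.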

Ablation on Observation Augmentation. We compared the time required to acquire 15 observations with single-point observation (one observation per simulation run) and with multi-point observation (observation augmentation). The proposed observation augmentation achieves more than a 4× speedup (17016 s → 4158 s).

Citation

@inproceedings{hirano2026bcp,

title={Detection-and-Tracking-Oriented Roadside Camera Placement via Observation-Augmented Bayesian Optimization},

author={Masahiro Hirano and Hayato Fujii and Yuji Yamakawa},

booktitle={Proceedings of 2026 IEEE 29th International Conference on Intelligent Transportation Systems (ITSC)},

year={2026}

}